Utah's AI bill is everything David Sacks asked for. He still wants it dead.

The aggressive campaign against HB 286 contradicts what the White House AI advisor has said about his policy goals.

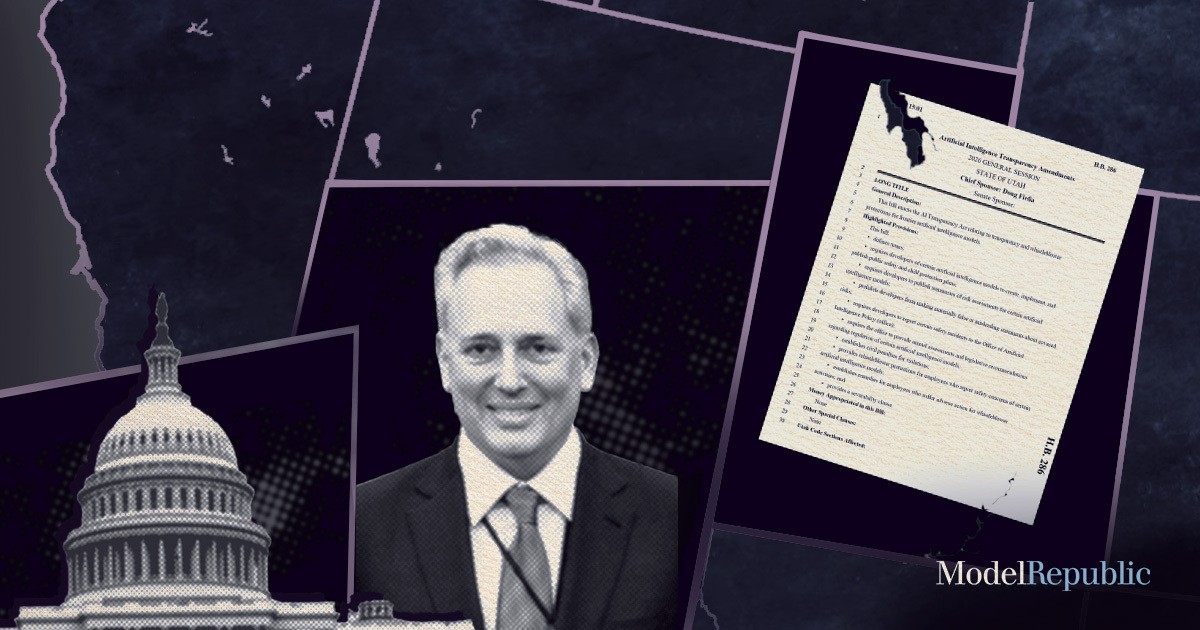

Members of the Trump administration are trying to kill an AI bill in Utah. On Feb. 12, the White House Office of Intergovernmental Affairs sent a letter to the state’s Senate Majority Leader saying they were “categorically opposed to Utah HB 286 and view it as an unfixable bill that goes against the Administration's AI Agenda,” as reported by Axios. Not only that, but White House officials have also had multiple conversations with the bill’s sponsor — Republican state Rep. Doug Fiefia — in the last few weeks. They’ve been urging him to drop it, saying there are no changes he could make that would make them happy. This isn’t the only state bill the White House has targeted recently; they’ve also contacted Florida legislators about opposing Gov. Ron DeSantis’ AI Bill of Rights.

This effort is a continuation of a crusade by David Sacks, Trump’s Special Advisor for AI and Crypto, to take down state AI laws. Sacks, who’s also a tech investor, was the main advocate and author behind Trump’s recent executive order going after these laws by directing the Attorney General to challenge them in court and threatening to cut state broadband funding. Sacks says that his aim is to avoid a “patchwork of 50 different regulatory regimes” on AI that will “stymie innovation, especially by small startups.” He’s also said that he’s not going after all state laws — “kid safety, we're going to protect. We're not pushing back on that” — but rather “the most onerous examples of state regulations.”

But what is actually in this Utah bill, HB 286, also known as the Artificial Intelligence Transparency Act? In reality, it's a light-touch bill that closely follows laws already passed in other states, applies only to the largest AI companies, explicitly paves the way for a federal AI framework and takes steps to protect child safety. The fact that Sacks is nonetheless trying to kill the bill and condemning it as irredeemable flies in the face of nearly everything he’s said about his AI agenda.

Utah’s bill goes out of its way to avoid a “patchwork” of rules

Much of what HB 286 does is not new. The bill largely mirrors California’s SB 53, an AI bill that passed last year. Both apply to AI developers with over $500 million in gross annual revenue — hardly small startups. Both require these companies to publish a safety plan addressing catastrophic risks from their most powerful AI models and comply with that plan. The bills don’t specify what safety measures these companies have to take — only that they have to follow the rules that they set for themselves. As we’ve previously found, Google and OpenAI are already failing to do this with respect to SB 53. Both bills would also penalize AI companies for making certain false or misleading statements about these risks, require them to report certain safety incidents to their respective state agencies, and protect whistleblowers who speak out about safety concerns. There are some differences between the bills in these areas; for example, HB 286’s whistleblower protections are broader than SB 53’s. But other details are kept the same, like the required timelines for incident reporting.

HB 286 isn’t the only state AI bill that’s followed the lead of SB 53. The RAISE Act, a bill that was passed in New York at the end of last year, also closely follows SB 53, including in its provisions on safety plan disclosures and incident reporting. While the RAISE Act originally contained stricter requirements for AI developers, New York Gov. Kathy Hochul negotiated with the bill’s authors to get a final version that was closer to SB 53. Sacks even spoke positively of Hochul for her efforts in changing the bill to be closer to SB 53, citing it as an example of a governor trying to avoid a patchwork of different state regulations. In Utah, Fiefia has done something similar with HB 286, modeling his bill after the standard set by SB 53. Yet instead of applauding him for adhering to an existing model, Sacks is trying to kill the bill.

SB 53 was itself a compromise. It was passed after California Gov. Gavin Newsom vetoed SB 1047, a more expansive AI safety bill that would have allowed the state Attorney General to sue AI companies for violations and required AI companies to build a shutdown mechanism into their models. By the time SB 53 was signed, AI companies were starting to get on board. It had been endorsed by Anthropic, and Meta called it a “positive step” in the direction of “balanced AI regulation.” In an effort to address concerns about a patchwork of different state AI laws, SB 53 went so far as to allow California authorities to designate a federal law to supersede and take the place of its own incident reporting requirements. OpenAI received this positively, saying it was “really pleased” with the provision, as did people like Dean Ball, who helped write America’s AI Action Plan. HB 286 has a similar mechanism that allows for harmonization with federal incident reporting rules.

If Sacks were truly concerned about state regulations stymieing innovation, you’d think he’d give Fiefia some credit for modeling his bill on a framework that industry leaders like Anthropic, Meta and OpenAI have spoken positively of. And if he were worried about a patchwork, he’d welcome the fact that Fiefia’s bill makes explicit efforts to harmonize itself with federal legislation. But this couldn’t be further from his response.

Not even child safety is safe

The main area where HB 286 goes beyond SB 53 is child safety. In addition to requiring that leading AI companies publish their safety plan for catastrophic risks, it also requires that these companies publish a plan for how they intend to address potential risks to minors from their chatbots. The plan only applies to chatbots that have at least 1 million monthly users, and only applies to chatbot behaviors that could lead to “death or bodily injury” or “severe emotional distress.” These rules follow the same relatively hands-off approach of the catastrophic risk rules: AI companies simply have to say what their plan is to address serious risks to minors, and then adhere to their plan.

Following several high-profile cases of chatbots advising young people on committing suicide, there is broad public concern about the risks chatbots pose to minors. In polling from the Institute for Family Studies, 90 percent of Americans want Congress to prioritize addressing chatbot harms to minors over preempting state AI laws, including 89 percent of Trump supporters. By similar margins, Americans think that families should be granted the right to sue AI companies for severe harms to children, a measure that goes well beyond anything in HB 286. Sacks, for his part, has acknowledged these concerns. In addition to his public statements about not pushing back on child safety, Trump’s AI executive order explicitly says that Sacks will instruct Congress not to preempt state child safety laws.

And yet Sacks now seems determined to shut down Utah legislators’ attempts to address chatbot risks to minors, despite the bill’s light-touch approach. Not even that part of HB 286 is considered salvageable.

Even Utah's own Republican governor appears to be pushing back. Spencer Cox, speaking at Politico's 2026 Governor’s Summit a week after the White House sent its letter to Utah legislators, said that states must have a role in regulating AI, including on child safety.

What this all says about Sacks’ AI agenda

HB 286 is only one of many state AI bills that have been introduced, and it is far from the most restrictive. If Sacks and his allies were serious about their commitment to going after the most onerous state AI measures, they might consider looking at bills like Tennessee's SB 1493, which makes it a felony to train an AI model to provide emotional support to a user (though it’s hard to imagine that particular bill passing).

The fact that Sacks and his allies have nonetheless decided to go after HB 286, and consider it “unfixable,” says a lot about what their AI agenda actually is. It’s not about going after the most onerous state AI bills, but going after any and all of them. Proactive efforts by state legislators to avoid contributing to a patchwork of different regulations won’t spare their bills from being targeted. Nor will exempting smaller AI companies and startups. Child safety bills aren’t safe either.

When Sacks and his industry allies take up the AI preemption fight again in Congress, this is also the agenda we should expect them to push. Even some members of the Trump administration think he’s going too far, with Politico reporting “frustration” at multiple agencies over his efforts to ram his agenda through. But key Democrats in Congress are still signaling that they might be willing to work with Republican leadership on AI legislation. If Sacks and the AI industry have their way, that will include overriding as many state laws as possible.
