OpenAI made a Pentagon deal overnight. Did anyone ask their safety committee?

A 24-hour Pentagon deal tested a key safeguard OpenAI promised would survive its pivot to for-profit.

By Tyler Johnston

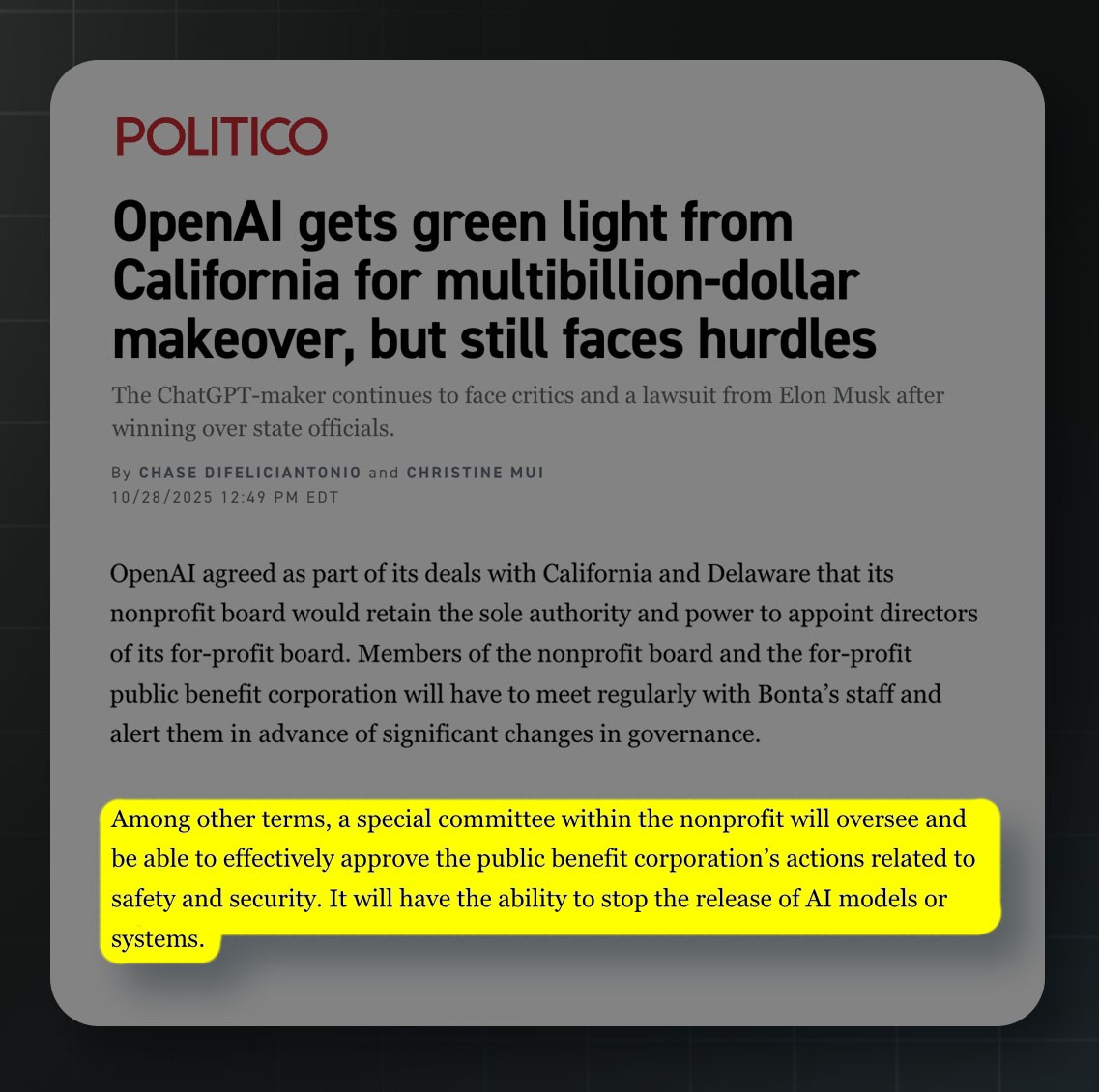

When the California and Delaware Attorneys General agreed not to contest OpenAI’s restructuring from a nonprofit into a for-profit last October, one of the most important concessions their offices secured was a requirement that safety and security decisions at the new for-profit public benefit corporation (PBC) be made with a sole fiduciary duty to OpenAI’s charitable mission (i.e., with no consideration for the commercial interests of the organization).

Under the memorandum of understanding (MOU) signed between California and OpenAI, the PBC board may not consider shareholder interests when making safety and security decisions, which are broadly defined to include model deployment decisions. The board exercises this authority through a Safety and Security Committee (SSC) housed at the nonprofit organization and chaired by Zico Kolter, a Carnegie Mellon professor who sits exclusively on the nonprofit board.

In other words, safety-related decisions about when, where, and how OpenAI models are deployed in the world are ultimately the purview of the SSC, a four-person committee tasked with making such decisions without any consideration for OpenAI’s commercial interests. In the eyes of the Attorneys General, this arrangement was the mechanism through which the public interest would survive the restructuring.

Last week, four months after this restructuring was made official, the company struck a controversial deal with the Pentagon to deploy its models in classified military environments without the hard red lines on mass surveillance and autonomous lethal weapons that had sunk negotiations between the Department of War and Anthropic, an OpenAI competitor.

The deal came together at extraordinary speed. On Thursday evening, after Anthropic announced that it did not intend to sign a deal, OpenAI CEO Sam Altman sent an internal memo telling employees that OpenAI was pursuing an agreement with the Pentagon to deploy its models, instead of Anthropic’s, on classified military networks. On Friday afternoon, he told staff at an all-hands meeting that a deal was taking shape, with OpenAI’s Head of National Security Partnerships Katrina Mulligan and Head of National Security Policy Sasha Baker presenting alongside him. That same Friday evening, President Trump said he had banned Anthropic from all federal agencies, and Defense Secretary Pete Hegseth said he had designated the company a supply chain risk. Hours later, late on Friday night, Altman announced on X that OpenAI’s deal was done. The entire sequence, from the first internal discussion of a potential OpenAI deal to the final public announcement, took roughly 24 hours. Altman himself later called it “definitely rushed” and admitted it “looked opportunistic and sloppy.”

The key issue looming over this saga is the potential enablement of mass surveillance and autonomous weapons using OpenAI models. This seems like a clear example of the kind of safety-relevant decision the SSC should be making: one insulated both from the financial incentives of the new contract and from the financial disincentives of joining Anthropic in being labeled a supply chain risk. At a minimum, that label would have put at risk the revenue from OpenAI’s earlier $200 million contract with the Pentagon, and far more could be at stake if government vendors were spooked by the designation and turned to other options.

Neither OpenAI nor any member of the SSC has said publicly that the nonprofit committee was consulted before the deal was announced. Instead, the spokespeople who have defended the deal both publicly and internally have been PBC staff (Altman, Mulligan, Baker, and safety researcher Boaz Barak). The SSC chair and two of its other three members seem to have said nothing. Retired General Paul Nakasone, the fourth member of the SSC, did address the situation the following Monday, but not in a way that suggested ownership of the decision, or even detailed knowledge of its specifics. According to Axios, he said the country needed all major AI companies, including Anthropic, working with the government. He called Anthropic’s supply chain risk designation “not right” and the broader situation “not a good space for our nation.” He called on lawmakers to think critically about monitoring the use of AI in the military. But overall, he described the fallout that followed the deal as something he had witnessed rather than something he had shaped: “The discussions over the weekend and the tenor of those discussions were tough for me to listen to.” Axios’ reporting of the event, the most detailed public account, contains no indication that Nakasone was involved in the decision-making that led to the “rushed” and seemingly “opportunistic” deal that came about on Friday.

Whether the SSC would have reached a different conclusion depends on what you think a mission-first review would have flagged, and on whether financial motivations were weighing on the PBC. OpenAI’s deal arrived at the precise moment a competitor was being driven out of the classified AI market. That created a commercial opportunity for the firm, but it also carried an implicit threat: if OpenAI were likewise deemed a supply chain risk (the designation Anthropic faced after refusing the contract, which could have jeopardized its work with other government vendors), the revenue at stake would be open-ended. An SSC considering only the mission might well have agreed to proceed, but it also might have insisted on the stronger safeguards that were patched in three days later.

In practice, the closest thing to safety oversight this deal received came not from the SSC but from OpenAI’s own rank-and-file employees. One hundred OpenAI employees signed a letter asking leadership to “continue to refuse the Department of War’s current demands for permission to use our models for domestic mass surveillance and autonomously killing people without human oversight.” Research scientist Aidan McLaughlin said, “I personally don’t think this deal was worth it.” Alignment researcher Leo Gao called the original contract language “obviously just ‘all lawful use’ followed by a bunch of stuff that is not really operative except as window dressing.”

We really can’t know, from the outside, whether the SSC was consulted. Board committees deliberate privately, and Nakasone’s comments weren’t altogether inconsistent with his having played a more active role in the negotiation. But the weight of circumstantial evidence runs in one direction. There is the compressed timeline; the fact that nearly every person publicly defending the deal was a PBC employee; the complete silence from the SSC chair and two of its other three members; the framing of Nakasone’s public remarks; the contract deficiencies that required post-hoc patching; Altman’s admission that the deal was “definitely rushed” and appeared “opportunistic and sloppy”; and the absence of any reference to the SSC or the nonprofit board in OpenAI’s extensive public communications about the deal, all of which were primarily attempting to reassure people that the company had handled this responsibly. Taken together, these point toward the hypothesis that the PBC made this decision hastily, without real engagement from the SSC beyond a simple heads-up.

The point of raising this is not to relitigate a deal that has already been struck. The terms have been agreed upon, the contract will proceed, and while reasonable people can disagree about whether OpenAI should have engaged with the Pentagon at all, de-escalation seems to be in everyone’s interest at this point.

What matters now is what happens next time. The Not for Private Gain coalition warned in November that it is unrealistic “to expect part-time directors alone to effectively exercise that power over a company with thousands of employees working at the frontier of a rapidly changing technology.” That warning looks prescient. If the SSC is going to function as something more than a paper commitment, it needs staffing, communication channels, and the institutional expectation that it will be in the room when decisions of this magnitude are being made.

If they haven’t already, California and Delaware ought to be asking serious questions about this saga and whether the safeguards that OpenAI promised them are holding up under their first real test. Because now, the question for the Attorneys General, the nonprofit board, and OpenAI at large is whether they will be ready for the next one.
